There is no global AI ethics code yet, but many regulations are in development worldwide to control the use of AI.

How can businesses comply with pending regulations when adopting artificial intelligence?

To succeed in the AI race, leaders should understand the implications of existing rules and the steps they can take to create their own AI code of conduct.

AI and Data Privacy Regulations

While there is no international AI code of ethics as of 2023, some existing regulations can already affect how companies use AI. Various nations and states have enacted or updated data privacy regulations in recent years, such as COPPA in the U.S. and GDPR in the EU. COPPA dates back to the late 1990s, while GDPR, which took effect in 2018, is a more recent response to international data privacy concerns.

AI Data Privacy in GDPR

The EU’s GDPR regulatory framework is widely considered the world’s most comprehensive data privacy law today. GDPR already affects AI, although the European Parliament has indicated that AI needs its own set of rules as well. GDPR places extensive restrictions on the collection and use of personal data, which can affect how AI is trained and used.

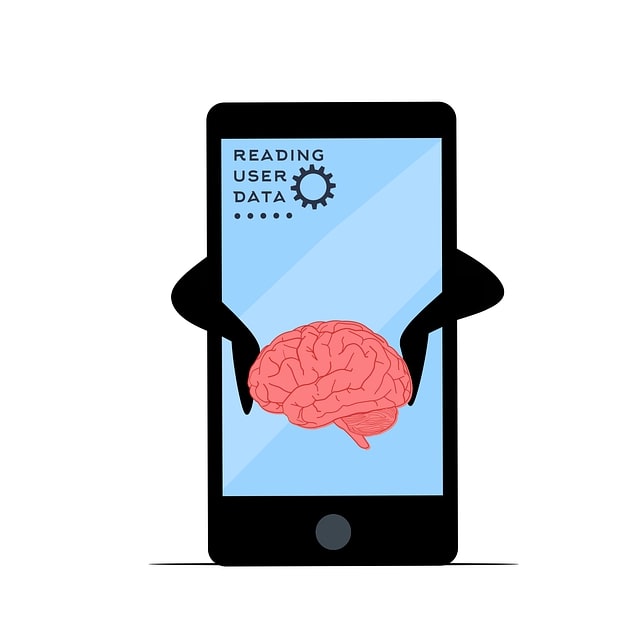

Machine learning models must be trained, and training requires an extensive data set for the algorithm to learn from. In regions with strict data privacy laws, such as the EU, organizations may be unable to use an individual’s personal data to train an AI model without a lawful basis, such as that person’s consent. This is one of the clearest ways existing GDPR provisions apply to AI.
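As an illustration, a training pipeline could drop any record without recorded permission before the data ever reaches the model. The sketch below is a minimal example, assuming a hypothetical `Record` type with a `consent_to_training` flag captured at collection time. Consent is only one of GDPR’s lawful bases, so a real pipeline’s rules would come from legal advice rather than this single check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """Hypothetical training record; field names are illustrative only."""
    user_id: str
    features: dict
    consent_to_training: bool  # assumed flag recorded when the data was collected


def filter_for_training(records):
    """Keep only records whose owners agreed to their use in AI training."""
    return [r for r in records if r.consent_to_training]


# Hypothetical sample data.
records = [
    Record("u1", {"age": 34}, consent_to_training=True),
    Record("u2", {"age": 51}, consent_to_training=False),
    Record("u3", {"age": 27}, consent_to_training=True),
]

training_set = filter_for_training(records)
print([r.user_id for r in training_set])  # ['u1', 'u3']
```

Filtering at the start of the pipeline, rather than inside the model code, makes it easier to document and audit which data was used.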

CCPA’s Impact on AI

Enterprises in the U.S. may also be subject to federal and state data privacy laws that can apply to AI training. For example, the California Consumer Privacy Act (CCPA) regulates the use of California residents’ personal data. Like GDPR, it limits the use of personal data for machine learning training.

CCPA also covers inferred data: conclusions about a person that a business derives from other information, often with AI. For example, when Netflix recommends shows to users, it uses AI to analyze their viewing history and predict what they might like. CCPA protects both collected and inferred personal data, giving consumers the right to request that it be deleted.

The main takeaway from these major data privacy regulations is the importance of transparency and explainability. Companies must be transparent about when and how they use personal data in AI applications. They also need to track how their AI systems use and infer personal data so they can ensure those systems are not violating consumers’ privacy.

Can AI-Generated Content Be Regulated?

More and more businesses are investing in generative AI today. It has some highly appealing use cases across numerous industries. However, is there an AI ethics code for those use cases?

Plagiarism and copyright are significant issues in generative AI. Organizations might accidentally use copyrighted material in AI-generated content without careful research and documentation. Copyright can be particularly confusing for businesses since there is no clear ruling on whether or not AI-generated content is considered original.

World leaders are taking steps to resolve this issue and provide much-needed guidance. One landmark proposal under consideration in the EU would require AI developers to disclose when copyrighted material was used to train their models. A regulation like this would help enterprises verify that the generative AI tools they use were trained on legally obtained or properly licensed content.

Building an AI Code of Conduct

How can companies ensure they respect consumers’ data privacy and copyright laws without comprehensive AI regulations? They can build their own AI code of conduct to demonstrate their commitment to their customers’ rights and confidentiality.

It is possible to do this without hindering AI adoption, as well. In fact, sticking to an AI code of ethics can help direct businesses toward the best applications for AI.

Use Case Transparency Is Paramount

One of the first things to include in an AI code of conduct is a commitment to transparency. A lack of transparency is one of the top concerns surrounding AI today. Many AI and machine learning models are not explainable, meaning users and developers can’t see how the algorithm reaches its outputs.

This isn’t necessarily a problem unless a business is using AI in a way that could negatively impact consumers. For example, global hiring is a poor application for AI because it requires compliance with non-discrimination laws and with data privacy regulations, which roughly 70% of the world’s countries have. Racial and gender bias in AI has already caused problems with hiring algorithms.

On the other hand, many people are happy to let an AI analyze their video streaming patterns to recommend new shows and movies. The difference here is the AI’s possible negative impact on the consumer is relatively low. In contrast, hiring AI could unfairly cost someone a job opportunity.

Enterprises need to be transparent about their use of AI regardless of the risk level or the extent of regulations. Transparency is key to ensuring consumer trust. It can even give businesses an advantage over competitors. Consumers who care about their data privacy will be more likely to spend money on a company that uses AI and data respectfully.

Transparency will also help organizations comply with AI regulations as they go into effect over the coming years. Government agencies and consumers don’t generally want businesses to avoid AI altogether — they simply want to know how and when AI is in use. Clear communication on this front allows enterprises to demonstrate compliance and build trust.

Working to Improve AI Logic Visibility

The AI “black box” refers to the inaccessible internal logic of many AI models, and it makes problems such as data bias hard to detect. Black box AI poses a major challenge for regulation and compliance. Since companies can’t see how the AI reaches its conclusions, they can’t fully guarantee the algorithm isn’t mishandling consumer data.

Black box AI models are also prone to replicate biases against people of colour, women and other minority groups. Since the AI’s logic pathways are inaccessible, these biases can easily go undetected unless they show up in blatantly obvious ways.

Businesses can address these issues by making logic visibility part of their AI ethics code. They can invest in explainable AI research and in newer, more transparent AI models. Organizations can also screen algorithms thoroughly for signs of bias and discrimination before applying them to any operational use case.
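One simple screening heuristic is the “four-fifths rule” used in U.S. employment practice. It compares each group’s selection rate with the highest group’s rate and flags any ratio below 0.8. The sketch below applies that rule to hypothetical outputs from a hiring model. The group labels, data and function names are invented for illustration, and passing this check does not prove a model is fair.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive decisions for each group.

    `decisions` is an iterable of (group, selected) pairs, where
    `selected` is True if the model recommended the candidate.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def adverse_impact_flags(decisions, threshold=0.8):
    """Flag groups whose selection rate is below `threshold` times the
    highest group's rate (the four-fifths heuristic)."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so the ratio is undefined; flag no group.
        return {g: False for g in rates}
    return {g: rate / best < threshold for g, rate in rates.items()}


# Hypothetical screening results from a hiring model.
decisions = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

print(selection_rates(decisions))       # {'group_a': 0.5, 'group_b': 0.3}
print(adverse_impact_flags(decisions))  # {'group_a': False, 'group_b': True}
```

Here group_b is selected at 60% of group_a’s rate, below the 0.8 threshold, so it is flagged for human review rather than automatically judged discriminatory.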

Avoiding High-Risk Applications

There are certain AI applications that business owners should be cautious about utilizing — especially while regulations are still in development. Generally, companies should avoid trusting AI completely, regardless of the application. AI models can be biased, return inaccurate or misleading information, and negatively impact customers. AI can be helpful, but humans should still make final decisions.

Complying With An AI Code of Ethics

GDPR, CCPA and other existing data privacy laws can already apply to AI. In addition, many new AI-specific regulations are in development worldwide. Businesses can prepare for compliance by building their own AI code of conduct that prioritizes transparency, consumer trust and ethical use cases.

Author Profile

- Online Media & PR Strategist

- As a London-based Chief Marketing Officer at the digital marketing agency ClickDo Ltd, I blog regularly about London, lifestyle, technology, business and many more topics.